Repeating your keyword in the body text to raise your keyword density is essential for showing Google what your article is about.

You should not overuse keywords; otherwise, Google may think you are trying to manipulate the search results. Too many keywords and your web page might suffer a penalty.

The big question is: what is normal?

Instead of applying a fixed percentage, Google is thought to judge the density of a keyword or keyword phrase for a particular query by comparing it with other highly ranked pages on the same topic using TF-IDF.

TF-IDF stands for Term Frequency–Inverse Document Frequency: a term scores highly when it appears often on a page but rarely across the other documents it is compared with.
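To make the idea concrete, here is a minimal Python sketch of one common textbook TF-IDF variant. Google has not published how, or whether, it uses TF-IDF, so treat this purely as an illustration: the function names `tokenize` and `tf_idf`, the smoothed IDF formula, and the three sample pages are all my own assumptions.

```python
import math
import re


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def tf_idf(term, document, corpus):
    """Score `term` for `document` relative to `corpus` (a list of texts).

    TF  = occurrences of the term / total words in the document
    IDF = log((1 + N) / (1 + df)) + 1, a smoothed variant (assumption)
    """
    term = term.lower()
    words = tokenize(document)
    if not words:
        return 0.0
    tf = words.count(term) / len(words)
    # df = number of texts in the corpus that contain the term at least once
    df = sum(1 for text in corpus if term in tokenize(text))
    idf = math.log((1 + len(corpus)) / (1 + df)) + 1
    return tf * idf


# Hypothetical pages standing in for other results on the same topic.
pages = [
    "keyword density is the share of words that are your keyword",
    "write naturally and focus on helpful content",
    "a guide to baking cookies at home",
]

print(tf_idf("keyword", pages[0], pages))  # frequent on page 0, rare elsewhere
print(tf_idf("cookies", pages[0], pages))  # absent from page 0, so 0.0
```

The point of the sketch is that the score depends on the comparison set as much as on the page itself, which is why a single "ideal" percentage does not fall out of it.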

Despite this, some SEO tools still push having your main keyword in the title, content, meta description, and image alt text. For example:

- Moz recommends using your keyword in each of these but balances this by flagging keyword stuffing.

- SEMrush, I think, does it slightly better, as it provides statistics such as the TF-IDF of the top results for your keyword for comparison purposes.

But be careful.

From experience, I know that when I focus too much on adhering to SEO tools’ recommendations, the quality of my writing goes down. Write naturally, and then, if required, tweak later after checking the page’s performance in your Search Console.

My experience is backed up by Google, which says that you should ignore keyword density. I will discuss this further in a moment.

Will higher keyword density improve your Google Rankings?

I took an in-depth look at what Googlers and other experts had to say:

Moz on-page optimization tool encourages the use of keywords.

When optimizing a page for a specific keyword in the Moz On-Page Grader, the tool encourages you to use your target keyword at least once in the Title, Body, Meta Description, URL, and Image Alt Tag.

They also alert you to keyword stuffing.

If you use keywords too many times in the document text, search engines may tag your page for keyword stuffing (a form of search engine spam), which can hurt your rankings in the search engines, as well as appear spam-like in the search results to potential visitors.

As I said previously, and as you will read further below, a more natural approach is perhaps needed.

Google: Keyword Stuffing is a sign of low-quality content

The Google Quality Raters Guidelines set out the characteristics that denote the lowest-quality webpages and content. One of the leading indicators of low-quality content is keyword stuffing.

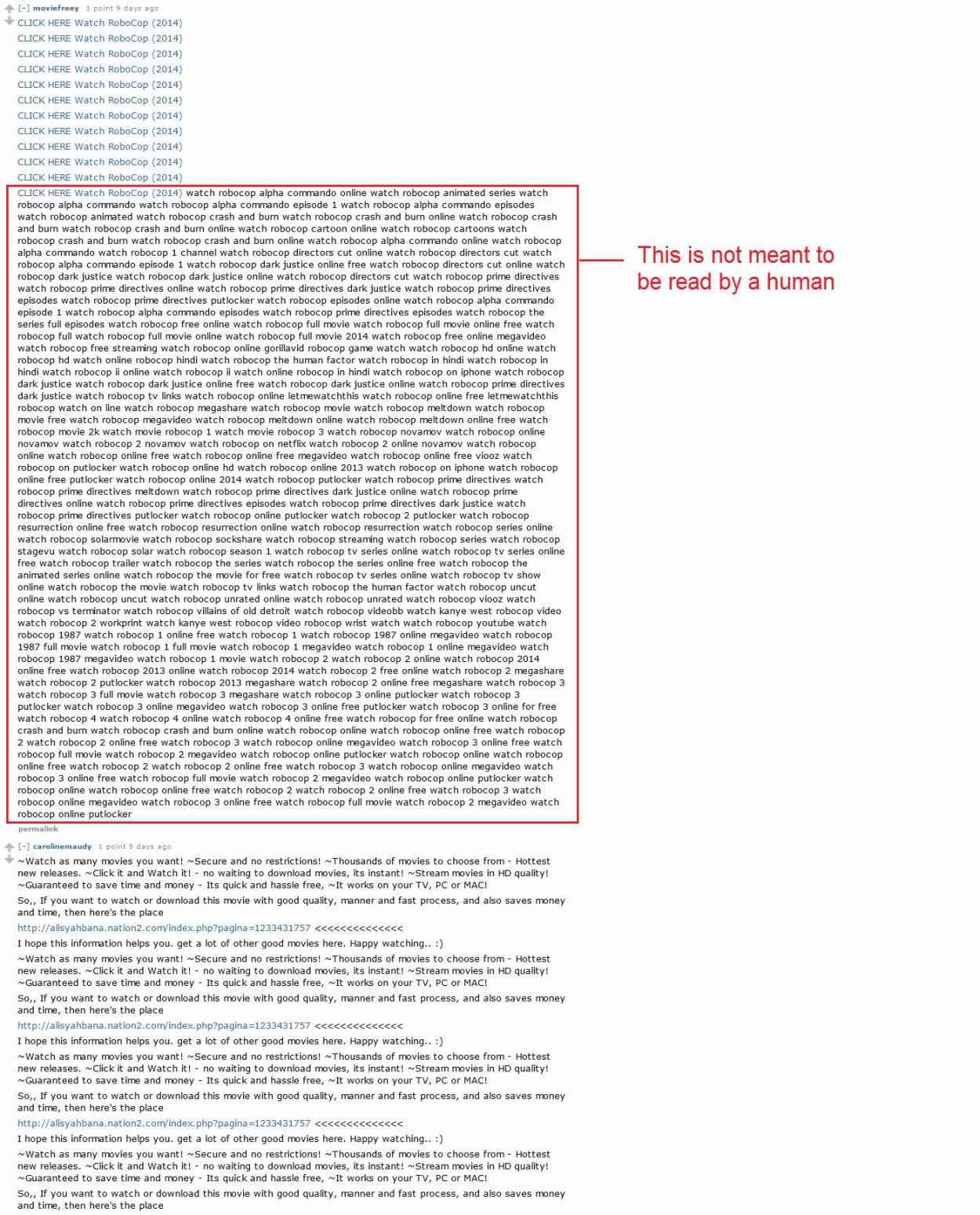

For the absolute lowest quality page, the result of keyword stuffing will be more akin to gibberish. Think of a block of text that is not meant to be read by a person. An extreme example is referenced in the Raters Guide, which you can see in the screenshot below:

While this doesn’t shed much light on whether keywords are a ranking factor, it does reaffirm Matt Cutts’s statement that keyword stuffing can cause a penalty (see later in the article).

That being said, John Mueller said in June 2018 that even if Google ignores the keyword stuffing, there is “sometimes still enough value to be found elsewhere” to help the page rank. Furthermore, keyword stuffing shouldn’t result in the removal of the page.

You’re right @JohnMu, but that amount of keyword repetition makes my eyes bleeding 😀

— Gianluca Fiorelli (@gfiorelli1) June 20, 2018

Mueller recommends you ignore Keyword Density - “Search engines have moved on.”

In an English Google Webmaster Central office-hours hangout on October 24, 2014, John Mueller said:

Keyword density, in general, is something I wouldn’t focus on. Make sure your content is written in a natural way. Humans, when they view your website, they’re not going to count the number of occurrences of each individual word.

And search engines have kind of moved on from there over the years as well. So they’re not going to be swayed by someone who just has the same keyword on their page 20 times because they think that this, kind of, helps search engines understand what this page is about.

Essentially, we look at the content. We try to understand it, as we would with normal text. And if we see that things like keyword stuffing are happening on a page, then we’ll try to ignore that and just focus on the rest of the page that we can find.

John Mueller, Google Webmaster Trends Analyst, was asked in an English Google Webmaster Central office-hours hangout about keyword density.

In particular, he was asked whether using exact match anchor text in navigation links or headers can be bad for SEO even when a site has unique content and the density of keywords is natural. Google confirmed that you should use anchor text that makes sense for your users.

He then talked about keyword density and how things have moved on from there. I think it is safe to take his comments to mean that Google now uses TF-IDF, although he doesn’t explicitly say this.

You can watch the entire conversation in the video below:

Matt Cutts wants SEOs to stop obsessing about keyword density.

In response to a question about keyword density in 2011, Matt Cutts confirmed that there is no hard and fast rule. It varies depending on the keyword and topic. If you stuff a page with keywords, it could hurt your position in the rankings.

Matt Cutts, the former head of Webspam at Google, was asked the following question:

What is the ideal keyword density: 0.7%, 7%, or 77%? Or is it some other number?

Here are some of the most important points from Matt Cutts’s reply:

A lot of people think there’s someone’s recipe, and you can just follow that like you know baking cookies, and if you follow it to the letter, you’ll rank number one, and that’s just not the way it works.

So if you think that you can just say, okay, I’m going to have 14.5% keyword density or seven percent or seventy-seven percent, and that will mean I’ll rank number one, that’s really not the case.

If you continue to repeat stuff over and over again, then you’re in danger of getting into keyword stuffing.

If you’re an experienced SEO, someone’s just like trying to get the same phrase on the page as many times as possible because it just looks fake, and that’s the sort of area in that niche where we try to say okay rather than helping let’s make that hurt a little bit.

So I would love it if people could stop obsessing about keyword density; you know it’s going to vary by area, it’s going to vary based on you know what other sites are ranking it. There’s not a hard and fast rule and anybody who tells you that there is a hard and fast rule.

The points made by Matt Cutts are interesting. Even as far back as 2011, Google seemed to be looking beyond a set keyword density. The right level varies with the density used by other sites already ranking. Furthermore, if you add too many keywords, Google may penalize your site.

It is best if you write normally. This is easier said than done, though.

Frequently Asked Questions

What is Keyword Density?

Keyword density is the number of times a specific keyword appears in a document relative to the total number of words in that document, usually expressed as a percentage.

How do you calculate Keyword Density?

There are several formulas for calculating keyword density.

Let me take a straightforward formula first:

- (Number of keywords / Total number of words on the page) * 100
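As a rough illustration, the simple formula can be sketched in a few lines of Python. The function name `keyword_density`, the tokenizing regular expression, and the sample sentence are my own assumptions; this is not how any particular SEO tool counts words.

```python
import re


def keyword_density(text, keyword):
    """Return single-word keyword density as a percentage.

    (Number of keywords / Total number of words on the page) * 100
    """
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    return words.count(keyword.lower()) * 100 / len(words)


sample = "Fresh coffee beans make better coffee than stale coffee beans"
print(keyword_density(sample, "coffee"))  # 3 of 10 words -> 30.0
```

Note that this version only handles a one-word keyword; a phrase such as "coffee beans" would never match a single token.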

However, this does not do the concept justice. Google is considerably smarter than that and, with TF-IDF, has moved well beyond even this next formula, which adjusts for multi-word key-phrases:

- Keyword Density = (Nkr / (Tkn - (Nkr x (Nwp - 1)))) x 100

Where:

- Nkr = how many times you repeated a specific key-phrase

- Nwp = number of words in your key-phrase

- Tkn = total words in the analyzed text
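Here is a hedged Python sketch of the phrase-adjusted formula above, using the same variable names (Nkr, Nwp, Tkn). The function name `phrase_density`, the tokenizer, the choice to count non-overlapping matches, and the sample text are assumptions of mine.

```python
import re


def phrase_density(text, phrase):
    """Keyword density for a key-phrase: (Nkr / (Tkn - (Nkr x (Nwp - 1)))) x 100.

    Nkr = times the phrase occurs, Nwp = words in the phrase,
    Tkn = total words in the analyzed text.
    """
    words = re.findall(r"[a-z0-9']+", text.lower())
    target = re.findall(r"[a-z0-9']+", phrase.lower())
    nwp, tkn = len(target), len(words)
    if nwp == 0 or tkn == 0:
        return 0.0
    # Count non-overlapping occurrences of the phrase in the word list.
    nkr, i = 0, 0
    while i <= tkn - nwp:
        if words[i:i + nwp] == target:
            nkr += 1
            i += nwp
        else:
            i += 1
    return nkr * 100 / (tkn - nkr * (nwp - 1))


sample = (
    "Keyword density matters less than people think "
    "because keyword density is only one signal"
)
# Nkr = 2, Nwp = 2, Tkn = 14, so the result is 2 / (14 - 2) * 100
print(phrase_density(sample, "keyword density"))
```

The adjustment treats each occurrence of the phrase as a single unit, so a two-word phrase used twice in 14 words is measured against 12 units rather than 14. For a one-word keyword (Nwp = 1) it reduces to the simple formula.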

But, as I have indicated in the article, discussions of keyword density in this way are now irrelevant. Google has moved on.

What tools can you use to check keyword density?

Many keyword research tools can help you decide on your primary and related keywords. Many will also check whether your keyword or phrase is optimized for Google, offer up semantic keywords or particular keyword phrases, and overall check your content quality.

Here are some of the most popular:

- SEMrush

- Cognitive SEO

- Moz

- Ahrefs

There are many more SEO tools, but these are the main ones.